|

And it sure is sending a non-trivial amount of error logs to ElasticCloud, which could explain the high % time used by write.Īt this point, my hypothesis for the root cause of high CPU usage and memory leak: use of winston-elasticsearch library coupled with the huge amount of logs being shipped through it. Timer for connections? Well, this application has create 256 connections to ElasticCloud.

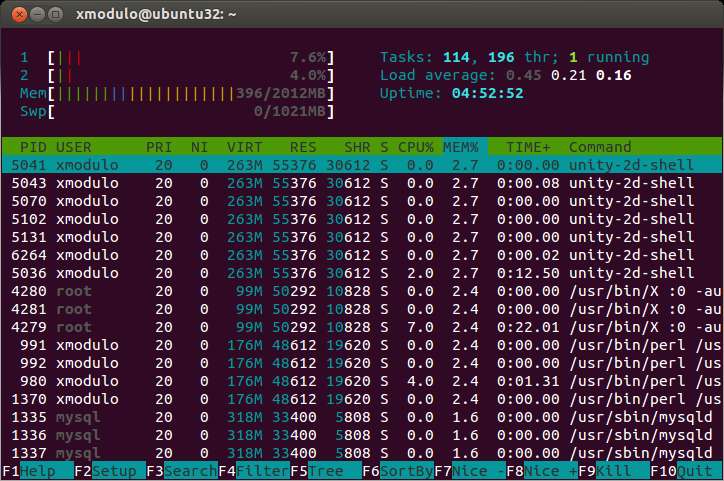

For example, a dedicated timer is used with each TCP connection to detect a possible connection timeout. On top of user code and 3rd-party libraries using timers, timers are also used internally by the Node.js platform itself. I came across an article on nodejs timers, which covers how timers are managed internally and one particular line stood out to me % time seconds usecs/call calls errors syscall For that, perf will be a better tool) # strace -p 25 -c (note: strace introduces a significant amount of performance overhead and is not recommended to run in production environment. Wanting to figure out which syscall is responsible for the bulk of CPU time, I attached strace to the node process and passed a -c flag to return a summary of syscalls traced. I also noticed that recv-q bytes is capped at 1101 and send-q is capped at 443. Having non-zero value under any of the queue columns indicates that data is not being processed fast enough, which could be due to an abnormally large volume of data or something is slowing down the processing. Tcp 1101 0 10.189.2.170:34586 some-ip:9243 ESTABLISHED 19/nodeĪs the name suggests, recv-q and send-q are the receive and send queues/buffer for a particular socket and indicates the number of bytes in the queue/buffer.

# netstat -ntp Active Internet connections (w/o servers ) Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name I also used netstat to get information on the recv-q and send-q on each socket. Notice that number of connections is capped at 256? Definitely not a coincidence and some code somewhere is somehow limiting the max number of connections. It also reduced the amount of logs produced.

Increasing the duration between scrape slowed down the rate of increase of connections. Turns out it did not start with 256 connections but instead increases over time. I restarted the application multiple times while varying the duration between scrape and observed the number of connections created. Indeed, we do use winston-elasticsearch library for establishing transport of logs to ElasticCloud. A quick Google search revealed that port 9243 is commonly used by ElasticCloud. Įxcluding the header row and the only listening connection, there’s a total of 256 connections, all to a bunch of IP addresses on port 9243. # lsof -i COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed